How LLMs Really Work (And How It Affects Your Content Strategy)

When you’re trying to create optimised content, large language models (LLMs) and the tools built on them, like Google AI Mode and ChatGPT, can feel like a black box. If content is your bread and butter, you’ll probably struggle to wrap your head around the deepest technical complexities of LLMs.

Here’s the good news: You don’t need a computer science degree to kick-start a generative engine optimisation (GEO) strategy. This guide will fill you in on the things you really need to know about LLMs, and how to create LLM-optimised SEO content that increases visibility for your brand.

I’ll examine three key functions of LLMs and explain how you can take advantage of them when writing your content. Find out what you need to know to implement effective LLM SEO strategies for Google AI Overviews, AI Mode, ChatGPT and more.

Key takeaways

- Content chunking: When retrieving text, LLMs prefer to look at chunks of around 400 words. Chunks should be self-contained, sit within an appropriate context and tackle opportunities for LLM personalisation.

- Natural language processing (NLP): To interpret human language, LLMs must apply numerical values to words through NLP. LLMs draw on knowledge graphs to support NLP. Techniques like semantic triples and statistics addition allow you to leverage NLP for generative engine optimisation.

- Query fan-out: LLMs run many sub-searches to deliver more detailed responses and draw on an online consensus. This system also helps LLMs to personalise outputs for users. Your content strategy must reflect fan-out queries, not just initial prompts.

1. Content chunking

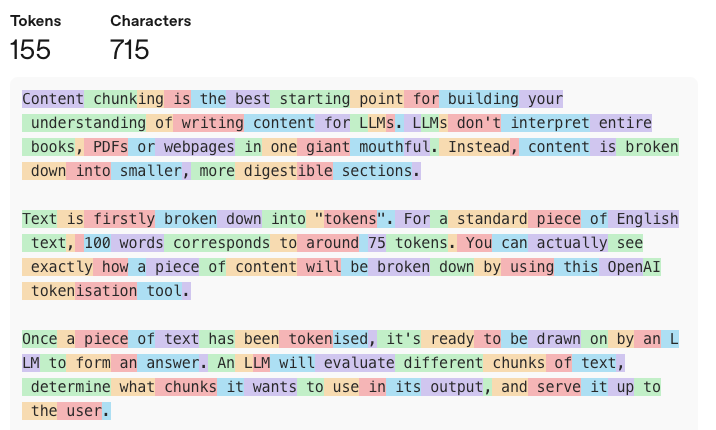

Content chunking is the best starting point for building your understanding of writing content for LLMs. LLMs don’t interpret entire books, PDFs or webpages in one giant mouthful. Instead, content is broken down into smaller, more digestible sections.

Text is first broken down into “tokens”. For a standard piece of English text, 100 tokens correspond to around 75 words. You can see exactly how a piece of content will be broken down by using this OpenAI tokenisation tool.

Once a piece of text has been tokenised, it’s ready to be drawn on by an LLM to form an answer. An LLM will evaluate different chunks of text, determine which chunks to use in its output, and serve them up to the user.

Chunks that LLMs will evaluate for answer inclusion can vary in size. Based on the way that most models have been trained, approximately 512 tokens (~400 words) is a good, rough number to keep in mind for chunk length. For simpler queries, much shorter chunks may be more readily reviewed.
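
To make those numbers concrete, here is a minimal Python sketch that estimates token counts from word counts and splits a page into chunks of roughly 512 tokens. It assumes the 0.75 words-per-token rule of thumb for English text, and the function names are hypothetical. Real retrieval systems use an actual tokeniser and usually split on headings or sentences rather than raw word counts.

```python
import math

WORDS_PER_TOKEN = 0.75  # rule of thumb for English text, not an exact figure
TARGET_TOKENS = 512     # rough chunk size discussed above


def estimate_tokens(text: str) -> int:
    """Estimate the token count of a text from its word count."""
    return math.ceil(len(text.split()) / WORDS_PER_TOKEN)


def split_into_chunks(text: str, target_tokens: int = TARGET_TOKENS) -> list[str]:
    """Split text into consecutive chunks of roughly target_tokens tokens each."""
    words = text.split()
    # 512 tokens * 0.75 words per token = 384 words per chunk
    words_per_chunk = max(1, int(target_tokens * WORDS_PER_TOKEN))
    return [
        " ".join(words[i:i + words_per_chunk])
        for i in range(0, len(words), words_per_chunk)
    ]


if __name__ == "__main__":
    page = "word " * 1000  # stand-in for a 1,000-word web page
    chunks = split_into_chunks(page)
    print(len(chunks), [estimate_tokens(chunk) for chunk in chunks])
```

Running a draft through something like this gives a quick feel for how many separate chunks a page might be read as, and whether each one could stand on its own.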

What does this really mean?

Well, optimising content for organic visibility is no longer (just) about taking your web page and putting it up against another site’s.

Instead, you need to start thinking about taking your chunk of text and ensuring it’s superior to your competitors’.

And it’s well worth taking the time to get this right. According to one 2024 study, up to 15% of text produced by LLMs is made up of snippets that can be found verbatim online. If you can create the chunk that an LLM wants to use, you can exercise a serious amount of control over the narrative around your business and your industry.

Creating a great content chunk

How do you create a perfect content chunk? This is where LLM optimisation and traditional search engine optimisation really do overlap. It’s all about being succinct while providing as much genuinely valuable information to the reader as possible.

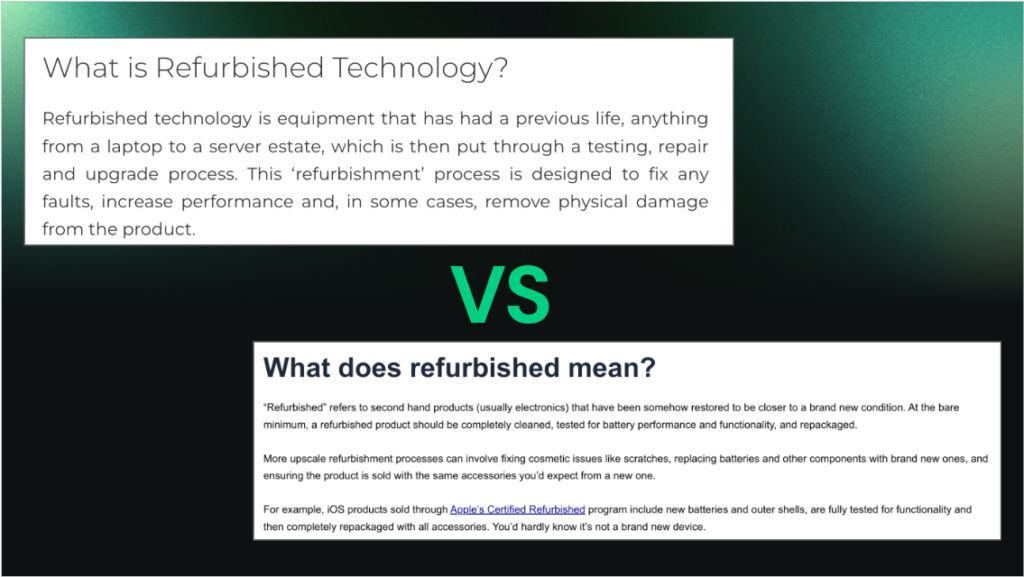

Consider the following examples:

Example 1: “ChatGPT is a generative AI chatbot released by OpenAI in November 2022. Using large language models, it can respond to user prompts through image and speech. In October 2025, OpenAI claimed that ChatGPT had 800 million weekly users. While there’s some scepticism about ChatGPT’s metric reporting and long-term outlook, it’s doubtless one of the most impactful technological innovations of the 21st Century.”

…versus…

Example 2: “When it comes time to ponder the most influential and innovative technologies of the 21st Century, one piece of software comes to mind: ChatGPT. OpenAI let this product loose in November 2022 and quickly made an impact. It seems like everyone and their dog is using it in an array of transformative ways. There are major question marks about how accurate and transparent OpenAI is in letting the world peek behind the curtain when it comes to reporting metrics. However, there’s no denying that this phenomenal product will be making all kinds of waves for many years and even decades to come. And who knows how much longer after that?”

No prizes for picking example one as the better option when it comes to LLM optimisation. It’s clearer, more succinct and the actual information is more densely packed into the chunk. It’s much easier for an LLM to dig up its insights and work them into an answer.

Parent document retrieval

None of this is to say that the web page around your chunks is irrelevant. It’s still important because of a concept known as parent document retrieval (PDR). PDR means an LLM’s retrieval system will analyse the page or text around a content chunk to ensure it’s being used in an appropriate context.
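
The idea can be sketched in a few lines of Python. This is a toy illustration only: the `Chunk` class, the sample content and the word-overlap score are all hypothetical, and production retrieval systems typically match chunks using embeddings rather than shared words. What it shows is the general pattern: match on a small chunk, then pull in the section around it for context.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    text: str
    parent_heading: str  # heading of the section the chunk sits under


# Hypothetical page content, split into small chunks that remember their parent section.
CHUNKS = [
    Chunk("Chunks of around 400 words are a useful rule of thumb.", "Content chunking"),
    Chunk("Headings should place each chunk in a clear context.", "Parent document retrieval"),
    Chunk("Key takeaways sections also give chunks context.", "Parent document retrieval"),
    Chunk("Sub-queries help an LLM build a more detailed answer.", "Query fan-out"),
]


def score(query: str, chunk: Chunk) -> int:
    """Count the words shared by the query and the chunk (a toy relevance score)."""
    query_words = set(query.lower().split())
    chunk_words = set(chunk.text.lower().rstrip(".").split())
    return len(query_words & chunk_words)


def retrieve_with_parent(query: str) -> tuple[str, list[str]]:
    """Find the best-matching chunk, then return its heading and every chunk under it."""
    best = max(CHUNKS, key=lambda chunk: score(query, chunk))
    siblings = [c.text for c in CHUNKS if c.parent_heading == best.parent_heading]
    return best.parent_heading, siblings


if __name__ == "__main__":
    heading, context = retrieve_with_parent("how should headings place a chunk")
    print(heading)
    print(context)
```

Notice that the heading travels with the chunk. A vague or misleading heading would hand the system the wrong context, even if the chunk itself is strong.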

To maximise retrievability, focus on your page’s heading structure. Your headings should clearly place a chunk in a relevant context. The chunks directly below your headings should ideally provide valuable, dense information relating to the subject.

Another great way to leverage parent document retrieval is to use key takeaways sections like the one in this very blog. Key takeaways don’t just serve up the most valuable insights of your content in an easily digestible way for human readers. They also help ensure that all of your content chunks are placed in an appropriate context when looked at by LLMs.

Optimising for parent document retrieval supports greater visibility in LLMs and AI search engines, while also bolstering traditional SEO.

Chunks as a content strategy

Understanding content chunking doesn’t just provide guidelines for your approach to writing content. It can actually inform your strategy itself.

Content chunking means you don’t need to produce an entire webpage to achieve AI visibility for a certain prompt. As long as you can find an appropriate position for it on your website, you can simply create a chunk that’s optimised for a query.

This approach also makes it easy to key in on more specific user intents. Now, if you’re running a website for an SEO agency, you don’t need to create entire pages targeting “best SEO agency for plumbers” and “best SEO agency for start-ups”. Much smaller content chunks can be enough to earn some high-value visibility and traffic.

2. Natural language processing

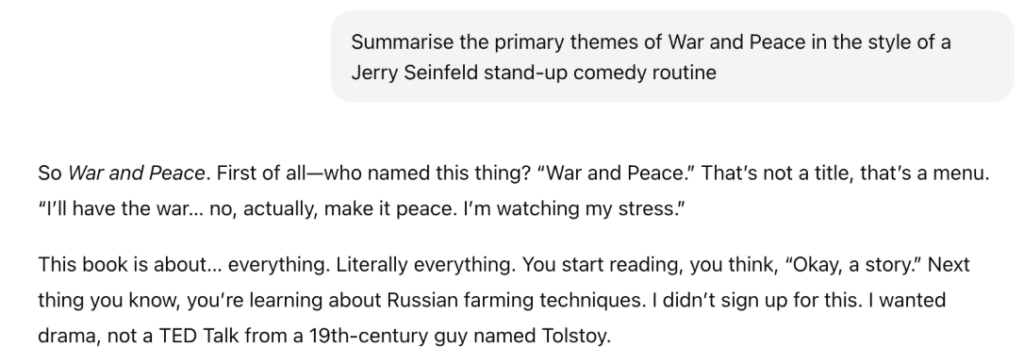

At this point, we’re all pretty well accustomed to the things LLMs can do. But seriously, how is it possible to have this interaction with a piece of computer software?

The answer is natural language processing (NLP). It’s how computers comprehend human language and formulate responses.

How does it work? Here’s a (highly simplified) breakdown:

- Tokenisation: As we’ve covered, LLMs break text down into individual tokens. 100 tokens are usually around 75 words.

- Feature extraction: Tokens are converted into numerical values. This is necessary because computers can only naturally understand numbers, not words.

- Semantic spacing: LLMs are able to more deeply understand how different words and entities relate to each other. They do this by building semantic “maps”, where very similar entities are given very similar numerical values.

- Named-entity recognition: LLMs identify people, things, places and events in a sentence. This is how they know that “War and Peace” refers to an 1869 book, not the individual concepts of “war” and “peace”.

- Dependency parsing: The system analyses the grammar of a sentence to understand how the words relate to each other.
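
The first three steps above can be sketched in plain Python. This is a heavily simplified illustration under stated assumptions: the tokeniser splits on whole words (real LLMs use subword tokens), the vectors are hand-written toy values rather than learned embeddings, and the example words are hypothetical. Named-entity recognition and dependency parsing are left out, as they need a trained model.

```python
import math
import re


def tokenise(text: str) -> list[str]:
    """Split text into lowercase word tokens (real LLMs use subword tokens)."""
    return re.findall(r"[a-z0-9']+", text.lower())


def build_vocab(tokens: list[str]) -> dict[str, int]:
    """Feature extraction, step one: give every distinct token an integer ID."""
    vocab: dict[str, int] = {}
    for token in tokens:
        vocab.setdefault(token, len(vocab))
    return vocab


# Hand-written toy vectors (hypothetical values, not learned embeddings).
TOY_VECTORS = {
    "sneaker": [0.9, 0.8, 0.1],
    "trainer": [0.8, 0.9, 0.2],
    "invoice": [0.1, 0.2, 0.9],
}


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Semantic spacing: vectors pointing in similar directions score close to 1."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


if __name__ == "__main__":
    tokens = tokenise("LLMs break text into tokens and tokens into numbers")
    vocab = build_vocab(tokens)
    print([vocab[token] for token in tokens])
    print(cosine_similarity(TOY_VECTORS["sneaker"], TOY_VECTORS["trainer"]))
    print(cosine_similarity(TOY_VECTORS["sneaker"], TOY_VECTORS["invoice"]))
```

In this toy map, "sneaker" and "trainer" score far closer to each other than either does to "invoice". That is the basic intuition behind similar entities getting similar numerical values.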

This is an incredibly complex process with plenty of room for error. It also contributes to the massive costs that AI companies are grappling with. The best way you can leverage this process is to make it as easy as possible for LLMs to understand your content.

Here are a few tactics to consider:

- Semantic triples: Writing in semantic triples means using an extremely simple structure of [subject], [predicate], [object], e.g. “Prosperity Media is an SEO agency”. This language mirrors semantic data structuring used by computers, making it very easy for LLMs to digest.

- Entity-based claims: Link claims about your brand to specific entities. Rather than talking about being a “high-quality SEO agency”, talk about having an “APAC Search Award-winning SEO agency”. This is a much more authoritative statement in the eyes of an LLM.

- Numbers and statistics: Remember that numbers are the natural language of an LLM. So, it’s more straightforward for a system to understand that headphones have an “18-hour” battery life as opposed to a “long-lasting” life. The same goes for statistics. According to a study from 2024, statistics addition can improve GEO performance by 33.9%.
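
To show why semantic triples map so neatly onto structured data, here is a small Python sketch. The `Triple` class is a hypothetical helper for illustration, not part of any LLM or SEO tool. It stores a claim as subject, predicate and object, and renders it back into a plain sentence.

```python
from typing import NamedTuple


class Triple(NamedTuple):
    subject: str
    predicate: str
    obj: str

    def to_sentence(self) -> str:
        """Render the triple as a plain subject-predicate-object sentence."""
        return f"{self.subject} {self.predicate} {self.obj}."


triples = [
    Triple("Prosperity Media", "is", "an SEO agency"),
    Triple("The headphones", "have", "an 18-hour battery life"),
]

for triple in triples:
    print(triple.to_sentence())
```

If a sentence in your draft can't be reduced to a structure like this without losing its meaning, it's often a sign that the claim is buried under too many qualifiers.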

Knowledge graphs

The basic process of NLP only gets an LLM so far. Gaining a deeper understanding of entities like your brand requires systems to rely on more complex functions to store knowledge.

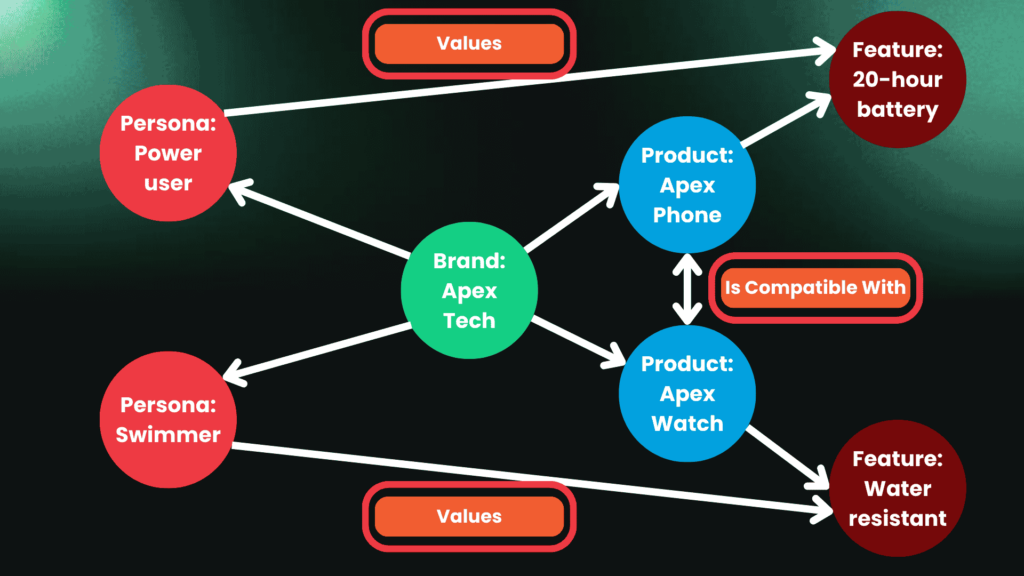

The easiest way to visualise this is as a knowledge graph. You might know knowledge graphs as the engine behind Google Knowledge Panels. Similar technology is at work to help LLMs function effectively.

Here’s what a knowledge graph might look like for your brand:

In the centre of the graph is your brand. Around it, you have products, product features and personas for your brand services. There are pretty clear connections to be drawn linking these graph nodes to your brand.

But pay attention to those extra connections linking the nodes to each other. For example, the Apex Phone is compatible with the Apex Watch, and the swimmer persona values the Apex Watch’s water resistance.

Making these connections on a brand’s knowledge graph can supercharge answer engine optimisation strategies. If a customer asks about a phone and watch combination with great compatibility features, having content specifically addressing this on its website makes Apex a better candidate for inclusion.
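
As a rough sketch, the Apex example can be written as a tiny graph in Python. The relation labels (`makes`, `compatible_with` and so on) and the helper functions are hypothetical, chosen only to illustrate the structure. Real knowledge graphs are far larger and are not stored this way.

```python
from collections import defaultdict

# Edges as (source, relation, target). The relation names are hypothetical labels.
EDGES = [
    ("Apex", "makes", "Apex Phone"),
    ("Apex", "makes", "Apex Watch"),
    ("Apex Phone", "compatible_with", "Apex Watch"),
    ("Apex Watch", "has_feature", "water resistance"),
    ("Swimmer", "values", "water resistance"),
]


def build_graph(edges):
    """Index edges by node so each node lists both its outgoing and incoming links."""
    graph = defaultdict(list)
    for source, relation, target in edges:
        graph[source].append((relation, target))
        graph[target].append((f"inverse_{relation}", source))
    return graph


def related(graph, node):
    """Return the set of nodes directly connected to the given node."""
    return {neighbour for _, neighbour in graph[node]}


if __name__ == "__main__":
    graph = build_graph(EDGES)
    print(related(graph, "Apex Watch"))
    print(related(graph, "water resistance"))
```

The useful links here are the ones that don't pass through the brand node: phone to watch, and swimmer to water resistance. Content that states those relationships explicitly gives a system more edges to work with.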

Want to develop on-site and off-site GEO strategies that feed the right information to LLMs? Get in touch with Prosperity Media and find out how we can help you achieve better AI search visibility.

3. Query fan-out

LLMs are able to offer highly detailed responses to queries. Ask an LLM about the best running shoes to buy, and you’ll probably see lists of shoes in a range of categories, tips for finding the right shoe, and more information helping you on your buying journey.

LLMs can achieve this level of detail through query fan-out. When an LLM seeks to answer a query that requires it to look outside its training data, it begins a process of retrieval-augmented generation (RAG). It scours the internet looking for sources to assist it in formulating an answer.

As part of this process, it relies on query fan-out. This means that it isn’t just searching a single query across the internet. It actually breaks queries down into a number of sub-queries. In the case of a query about “best running shoes”, the query fan-out process might look like this:
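
As a rough illustration in Python, the sketch below expands one seed query into several sub-queries and then ranks sources by how many sub-queries returned them. Everything here is hypothetical: the facets, the `.example` domains and the function names are made up for illustration and are not taken from any real LLM trace. Real systems generate sub-queries with the model itself rather than from a fixed list.

```python
from collections import Counter


def fan_out(seed_query: str, facets: list[str]) -> list[str]:
    """Expand one seed query into the original plus several narrower sub-queries."""
    return [seed_query] + [f"{seed_query} {facet}" for facet in facets]


def consensus(results_per_query: dict[str, list[str]]) -> list[tuple[str, int]]:
    """Rank sources by how many sub-queries returned them."""
    counts = Counter(
        source
        for sources in results_per_query.values()
        for source in set(sources)  # count each source once per sub-query
    )
    return counts.most_common()


# Hypothetical facets and search results, for illustration only.
FACETS = ["for beginners", "for marathon training", "on a budget", "reviews"]
SAMPLE_RESULTS = {
    "best running shoes": ["site-a.example", "site-b.example"],
    "best running shoes for beginners": ["site-a.example"],
    "best running shoes reviews": ["site-c.example", "site-a.example"],
}

if __name__ == "__main__":
    for query in fan_out("best running shoes", FACETS):
        print(query)
    print(consensus(SAMPLE_RESULTS))
```

In this made-up data, the source that appears across all three sub-queries comes out on top, which is the sense in which fan-out rewards content that covers more than the initial prompt.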

You can use tools like Hall to see fan-out queries that are relevant for your brand. You can then create content chunks and pages, aiming to insert your brand into those conversations and drive more visibility.

Off-site GEO

Query fan-out also necessitates an off-site GEO strategy. You need to find out which websites other than your own are being used to inform LLMs about your brand and industry.

Semrush released a major study on this very subject in November 2025. According to their findings, Reddit is the single most cited domain across ChatGPT, Google AI Mode and Perplexity. It’s followed by LinkedIn, Wikipedia, Medium and YouTube.

Apart from Wikipedia, these websites present low barriers for use by your brand. This gives you the chance to insert your business into more conversations. Tools like Ahrefs let you see the exact sites LLMs source for selected prompts, allowing you to get highly relevant options for your brand.

Meanwhile, 2026 research from Wix found that listicles are the most-cited content type by those same three AI platforms. They account for 21.9% of all citations.

Heightened personalisation

Query fan-out also allows LLMs to modify queries to align them with the information they know about users. All major LLMs have unique ways of personalising outputs for users, from chat memories to connections to related social media accounts.

If the LLM you’re using knows you’re a marathon runner, it can adjust fan-out queries to find more relevant sources and deliver a better output. As a brand, you have to keep up with the in-depth level of knowledge LLMs have about your potential customers.
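
Here is a minimal Python sketch of that adjustment. It assumes a hypothetical `personalise` function that simply appends known user traits to each sub-query. How real LLMs apply personalisation is not public, so treat this purely as a way to picture the effect.

```python
def personalise(sub_queries: list[str], user_traits: list[str]) -> list[str]:
    """Append known user traits to each sub-query, skipping traits already present."""
    personalised = []
    for query in sub_queries:
        extras = [trait for trait in user_traits if trait.lower() not in query.lower()]
        personalised.append(" ".join([query, *extras]))
    return personalised


if __name__ == "__main__":
    queries = ["best running shoes", "running shoe reviews"]
    print(personalise(queries, ["for marathon runners"]))
```

The practical takeaway is that the queries your content needs to match may carry audience details the user never typed.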

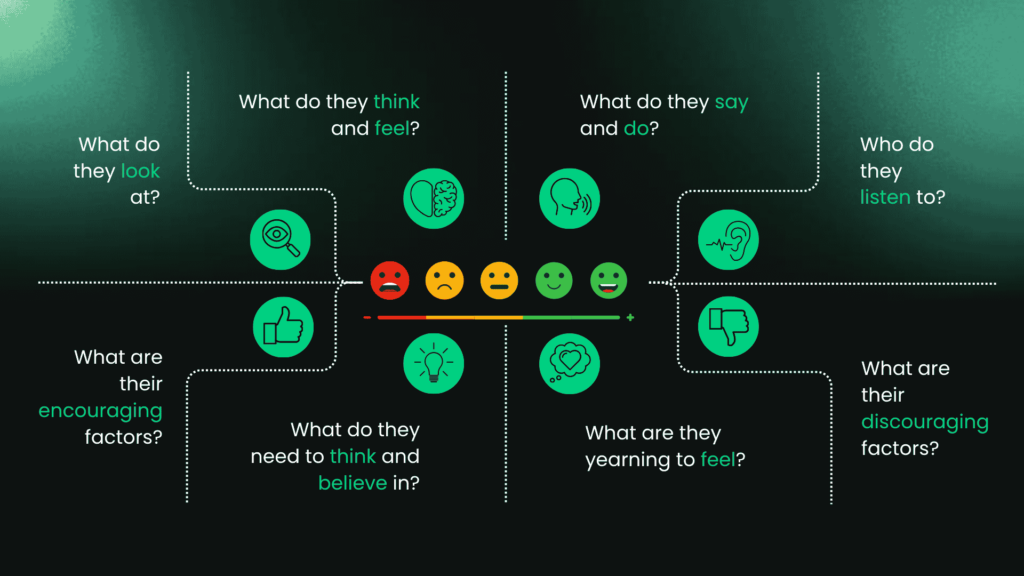

The content team at Prosperity Media uses empathy maps to ensure we have a deep understanding of the audiences we write for.

With steps like these, we can keep up with the knowledge LLMs have about their users and produce content that meets the system where it’s at. Get this process right, and you can ensure AI search brings in seriously qualified leads for your business. That’s how we built our GEO content strategy for GO Rentals, driving a 450.95% ROI from LLM traffic in just six months.

Want to hear some extra detail about these subjects? I discussed all of the above and more in my talk at the Sydney SEO Conference 2026. Check out my full presentation below:

Keen for more insights? You can also download my 15-step checklist to kickstart your GEO content strategy.

Hopefully, I’ve given you a few things to think about when it comes to creating great GEO content strategies. If you’re ready to earn more AI visibility and start controlling conversations about your brand, book your free consultation call today.
